Where to get Hadoop Winutils

If you see something like this when launching Spark on Windows:

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/C:/Users/me/scoop/apps/spark/current/jars/spark-unsafe_2.12-3.2.1.jar) to constructor java.nio.DirectByteBuffer(long,int)

WARNING: Please consider reporting this to the maintainers of org.apache.spark.unsafe.Platform

WARNING: Use --illegal-access=warn to enable warnings of further illegal reflective access operations

WARNING: All illegal access operations will be denied in a future release

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

22/03/16 09:51:37 ERROR SparkContext: Error initializing SparkContext.

java.lang.reflect.InvocationTargetException

at java.base/jdk.internal.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at java.base/jdk.internal.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.base/java.lang.reflect.Constructor.newInstance(Constructor.java:490)

at org.apache.spark.executor.Executor.addReplClassLoaderIfNeeded(Executor.scala:909)

at org.apache.spark.executor.Executor.<init>(Executor.scala:160)

at org.apache.spark.scheduler.local.LocalEndpoint.<init>(LocalSchedulerBackend.scala:64)

at org.apache.spark.scheduler.local.LocalSchedulerBackend.start(LocalSchedulerBackend.scala:132)

at org.apache.spark.scheduler.TaskSchedulerImpl.start(TaskSchedulerImpl.scala:220)

at org.apache.spark.SparkContext.<init>(SparkContext.scala:581)

at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2690)

at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$2(SparkSession.scala:949)

at scala.Option.getOrElse(Option.scala:189)

at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:943)

at org.apache.spark.repl.Main$.createSparkSession(Main.scala:106)

at $line3.$read$$iw$$iw.<init>(<console>:15)

at $line3.$read$$iw.<init>(<console>:42)

at $line3.$read.<init>(<console>:44)

at $line3.$read$.<init>(<console>:48)

at $line3.$read$.<clinit>(<console>)

at $line3.$eval$.$print$lzycompute(<console>:7)

at $line3.$eval$.$print(<console>:6)

at $line3.$eval.$print(<console>)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base/java.lang.reflect.Method.invoke(Method.java:566)

at scala.tools.nsc.interpreter.IMain$ReadEvalPrint.call(IMain.scala:747)

at scala.tools.nsc.interpreter.IMain$Request.loadAndRun(IMain.scala:1020)

at scala.tools.nsc.interpreter.IMain.$anonfun$interpret$1(IMain.scala:568)

at scala.reflect.internal.util.ScalaClassLoader.asContext(ScalaClassLoader.scala:36)

at scala.reflect.internal.util.ScalaClassLoader.asContext$(ScalaClassLoader.scala:116)

at scala.reflect.internal.util.AbstractFileClassLoader.asContext(AbstractFileClassLoader.scala:41)

at scala.tools.nsc.interpreter.IMain.loadAndRunReq$1(IMain.scala:567)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:594)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:564)

at scala.tools.nsc.interpreter.IMain.$anonfun$quietRun$1(IMain.scala:216)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:206)

at scala.tools.nsc.interpreter.IMain.quietRun(IMain.scala:216)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$2(SparkILoop.scala:83)

at scala.collection.immutable.List.foreach(List.scala:431)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$1(SparkILoop.scala:83)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.savingReplayStack(ILoop.scala:97)

at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:83)

at org.apache.spark.repl.SparkILoop.$anonfun$process$4(SparkILoop.scala:165)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.$anonfun$mumly$1(ILoop.scala:166)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:206)

at scala.tools.nsc.interpreter.ILoop.mumly(ILoop.scala:163)

at org.apache.spark.repl.SparkILoop.loopPostInit$1(SparkILoop.scala:153)

at org.apache.spark.repl.SparkILoop.$anonfun$process$10(SparkILoop.scala:221)

at org.apache.spark.repl.SparkILoop.withSuppressedSettings$1(SparkILoop.scala:189)

at org.apache.spark.repl.SparkILoop.startup$1(SparkILoop.scala:201)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:236)

at org.apache.spark.repl.Main$.doMain(Main.scala:78)

at org.apache.spark.repl.Main$.main(Main.scala:58)

at org.apache.spark.repl.Main.main(Main.scala)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base/java.lang.reflect.Method.invoke(Method.java:566)

at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:955)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1043)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1052)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: java.net.URISyntaxException: Illegal character in path at index 30: spark://192.168.1.228:59852/C:\classes

at java.base/java.net.URI$Parser.fail(URI.java:2915)

at java.base/java.net.URI$Parser.checkChars(URI.java:3086)

at java.base/java.net.URI$Parser.parseHierarchical(URI.java:3168)

at java.base/java.net.URI$Parser.parse(URI.java:3116)

at java.base/java.net.URI.<init>(URI.java:600)

at org.apache.spark.repl.ExecutorClassLoader.<init>(ExecutorClassLoader.scala:57)

... 70 more

22/03/16 09:51:37 ERROR Utils: Uncaught exception in thread main

java.lang.NullPointerException

at org.apache.spark.scheduler.local.LocalSchedulerBackend.org$apache$spark$scheduler$local$LocalSchedulerBackend$$stop(LocalSchedulerBackend.scala:173)

at org.apache.spark.scheduler.local.LocalSchedulerBackend.stop(LocalSchedulerBackend.scala:144)

at org.apache.spark.scheduler.TaskSchedulerImpl.stop(TaskSchedulerImpl.scala:927)

at org.apache.spark.scheduler.DAGScheduler.stop(DAGScheduler.scala:2567)

at org.apache.spark.SparkContext.$anonfun$stop$12(SparkContext.scala:2086)

at org.apache.spark.util.Utils$.tryLogNonFatalError(Utils.scala:1442)

at org.apache.spark.SparkContext.stop(SparkContext.scala:2086)

at org.apache.spark.SparkContext.<init>(SparkContext.scala:677)

at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2690)

at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$2(SparkSession.scala:949)

at scala.Option.getOrElse(Option.scala:189)

at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:943)

at org.apache.spark.repl.Main$.createSparkSession(Main.scala:106)

at $line3.$read$$iw$$iw.<init>(<console>:15)

at $line3.$read$$iw.<init>(<console>:42)

at $line3.$read.<init>(<console>:44)

at $line3.$read$.<init>(<console>:48)

at $line3.$read$.<clinit>(<console>)

at $line3.$eval$.$print$lzycompute(<console>:7)

at $line3.$eval$.$print(<console>:6)

at $line3.$eval.$print(<console>)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base/java.lang.reflect.Method.invoke(Method.java:566)

at scala.tools.nsc.interpreter.IMain$ReadEvalPrint.call(IMain.scala:747)

at scala.tools.nsc.interpreter.IMain$Request.loadAndRun(IMain.scala:1020)

at scala.tools.nsc.interpreter.IMain.$anonfun$interpret$1(IMain.scala:568)

at scala.reflect.internal.util.ScalaClassLoader.asContext(ScalaClassLoader.scala:36)

at scala.reflect.internal.util.ScalaClassLoader.asContext$(ScalaClassLoader.scala:116)

at scala.reflect.internal.util.AbstractFileClassLoader.asContext(AbstractFileClassLoader.scala:41)

at scala.tools.nsc.interpreter.IMain.loadAndRunReq$1(IMain.scala:567)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:594)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:564)

at scala.tools.nsc.interpreter.IMain.$anonfun$quietRun$1(IMain.scala:216)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:206)

at scala.tools.nsc.interpreter.IMain.quietRun(IMain.scala:216)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$2(SparkILoop.scala:83)

at scala.collection.immutable.List.foreach(List.scala:431)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$1(SparkILoop.scala:83)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.savingReplayStack(ILoop.scala:97)

at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:83)

at org.apache.spark.repl.SparkILoop.$anonfun$process$4(SparkILoop.scala:165)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.$anonfun$mumly$1(ILoop.scala:166)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:206)

at scala.tools.nsc.interpreter.ILoop.mumly(ILoop.scala:163)

at org.apache.spark.repl.SparkILoop.loopPostInit$1(SparkILoop.scala:153)

at org.apache.spark.repl.SparkILoop.$anonfun$process$10(SparkILoop.scala:221)

at org.apache.spark.repl.SparkILoop.withSuppressedSettings$1(SparkILoop.scala:189)

at org.apache.spark.repl.SparkILoop.startup$1(SparkILoop.scala:201)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:236)

at org.apache.spark.repl.Main$.doMain(Main.scala:78)

at org.apache.spark.repl.Main$.main(Main.scala:58)

at org.apache.spark.repl.Main.main(Main.scala)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base/java.lang.reflect.Method.invoke(Method.java:566)

at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:955)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1043)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1052)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

22/03/16 09:51:37 WARN MetricsSystem: Stopping a MetricsSystem that is not running

22/03/16 09:51:37 ERROR Main: Failed to initialize Spark session.

java.lang.reflect.InvocationTargetException

at java.base/jdk.internal.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at java.base/jdk.internal.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.base/java.lang.reflect.Constructor.newInstance(Constructor.java:490)

at org.apache.spark.executor.Executor.addReplClassLoaderIfNeeded(Executor.scala:909)

at org.apache.spark.executor.Executor.<init>(Executor.scala:160)

at org.apache.spark.scheduler.local.LocalEndpoint.<init>(LocalSchedulerBackend.scala:64)

at org.apache.spark.scheduler.local.LocalSchedulerBackend.start(LocalSchedulerBackend.scala:132)

at org.apache.spark.scheduler.TaskSchedulerImpl.start(TaskSchedulerImpl.scala:220)

at org.apache.spark.SparkContext.<init>(SparkContext.scala:581)

at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2690)

at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$2(SparkSession.scala:949)

at scala.Option.getOrElse(Option.scala:189)

at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:943)

at org.apache.spark.repl.Main$.createSparkSession(Main.scala:106)

at $line3.$read$$iw$$iw.<init>(<console>:15)

at $line3.$read$$iw.<init>(<console>:42)

at $line3.$read.<init>(<console>:44)

at $line3.$read$.<init>(<console>:48)

at $line3.$read$.<clinit>(<console>)

at $line3.$eval$.$print$lzycompute(<console>:7)

at $line3.$eval$.$print(<console>:6)

at $line3.$eval.$print(<console>)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base/java.lang.reflect.Method.invoke(Method.java:566)

at scala.tools.nsc.interpreter.IMain$ReadEvalPrint.call(IMain.scala:747)

at scala.tools.nsc.interpreter.IMain$Request.loadAndRun(IMain.scala:1020)

at scala.tools.nsc.interpreter.IMain.$anonfun$interpret$1(IMain.scala:568)

at scala.reflect.internal.util.ScalaClassLoader.asContext(ScalaClassLoader.scala:36)

at scala.reflect.internal.util.ScalaClassLoader.asContext$(ScalaClassLoader.scala:116)

at scala.reflect.internal.util.AbstractFileClassLoader.asContext(AbstractFileClassLoader.scala:41)

at scala.tools.nsc.interpreter.IMain.loadAndRunReq$1(IMain.scala:567)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:594)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:564)

at scala.tools.nsc.interpreter.IMain.$anonfun$quietRun$1(IMain.scala:216)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:206)

at scala.tools.nsc.interpreter.IMain.quietRun(IMain.scala:216)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$2(SparkILoop.scala:83)

at scala.collection.immutable.List.foreach(List.scala:431)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$1(SparkILoop.scala:83)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.savingReplayStack(ILoop.scala:97)

at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:83)

at org.apache.spark.repl.SparkILoop.$anonfun$process$4(SparkILoop.scala:165)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.$anonfun$mumly$1(ILoop.scala:166)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:206)

at scala.tools.nsc.interpreter.ILoop.mumly(ILoop.scala:163)

at org.apache.spark.repl.SparkILoop.loopPostInit$1(SparkILoop.scala:153)

at org.apache.spark.repl.SparkILoop.$anonfun$process$10(SparkILoop.scala:221)

at org.apache.spark.repl.SparkILoop.withSuppressedSettings$1(SparkILoop.scala:189)

at org.apache.spark.repl.SparkILoop.startup$1(SparkILoop.scala:201)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:236)

at org.apache.spark.repl.Main$.doMain(Main.scala:78)

at org.apache.spark.repl.Main$.main(Main.scala:58)

at org.apache.spark.repl.Main.main(Main.scala)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base/java.lang.reflect.Method.invoke(Method.java:566)

at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:955)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1043)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1052)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: java.net.URISyntaxException: Illegal character in path at index 30: spark://192.168.1.228:59852/C:\classes

at java.base/java.net.URI$Parser.fail(URI.java:2915)

at java.base/java.net.URI$Parser.checkChars(URI.java:3086)

at java.base/java.net.URI$Parser.parseHierarchical(URI.java:3168)

at java.base/java.net.URI$Parser.parse(URI.java:3116)

at java.base/java.net.URI.<init>(URI.java:600)

at org.apache.spark.repl.ExecutorClassLoader.<init>(ExecutorClassLoader.scala:57)

... 70 more

22/03/16 09:51:37 ERROR Utils: Uncaught exception in thread Thread-0

java.lang.ExceptionInInitializerError

at org.apache.spark.executor.Executor.stop(Executor.scala:333)

at org.apache.spark.executor.Executor.$anonfun$stopHookReference$1(Executor.scala:76)

at org.apache.spark.util.SparkShutdownHook.run(ShutdownHookManager.scala:214)

at org.apache.spark.util.SparkShutdownHookManager.$anonfun$runAll$2(ShutdownHookManager.scala:188)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at org.apache.spark.util.Utils$.logUncaughtExceptions(Utils.scala:2019)

at org.apache.spark.util.SparkShutdownHookManager.$anonfun$runAll$1(ShutdownHookManager.scala:188)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.util.Try$.apply(Try.scala:213)

at org.apache.spark.util.SparkShutdownHookManager.runAll(ShutdownHookManager.scala:188)

at org.apache.spark.util.SparkShutdownHookManager$$anon$2.run(ShutdownHookManager.scala:178)

at org.apache.hadoop.util.ShutdownHookManager$1.run(ShutdownHookManager.java:54)

Caused by: java.lang.NullPointerException

at org.apache.spark.shuffle.ShuffleBlockPusher$.<init>(ShuffleBlockPusher.scala:465)

at org.apache.spark.shuffle.ShuffleBlockPusher$.<clinit>(ShuffleBlockPusher.scala)

... 12 more

22/03/16 09:51:37 WARN ShutdownHookManager: ShutdownHook '' failed, java.lang.ExceptionInInitializerError

java.lang.ExceptionInInitializerError

at org.apache.spark.executor.Executor.stop(Executor.scala:333)

at org.apache.spark.executor.Executor.$anonfun$stopHookReference$1(Executor.scala:76)

at org.apache.spark.util.SparkShutdownHook.run(ShutdownHookManager.scala:214)

at org.apache.spark.util.SparkShutdownHookManager.$anonfun$runAll$2(ShutdownHookManager.scala:188)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at org.apache.spark.util.Utils$.logUncaughtExceptions(Utils.scala:2019)

at org.apache.spark.util.SparkShutdownHookManager.$anonfun$runAll$1(ShutdownHookManager.scala:188)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.util.Try$.apply(Try.scala:213)

at org.apache.spark.util.SparkShutdownHookManager.runAll(ShutdownHookManager.scala:188)

at org.apache.spark.util.SparkShutdownHookManager$$anon$2.run(ShutdownHookManager.scala:178)

at org.apache.hadoop.util.ShutdownHookManager$1.run(ShutdownHookManager.java:54)

Caused by: java.lang.NullPointerException

at org.apache.spark.shuffle.ShuffleBlockPusher$.<init>(ShuffleBlockPusher.scala:465)

at org.apache.spark.shuffle.ShuffleBlockPusher$.<clinit>(ShuffleBlockPusher.scala)

... 12 more

then your version of hadoop-winutils is most probably wrong. Get an up-to-date one here.

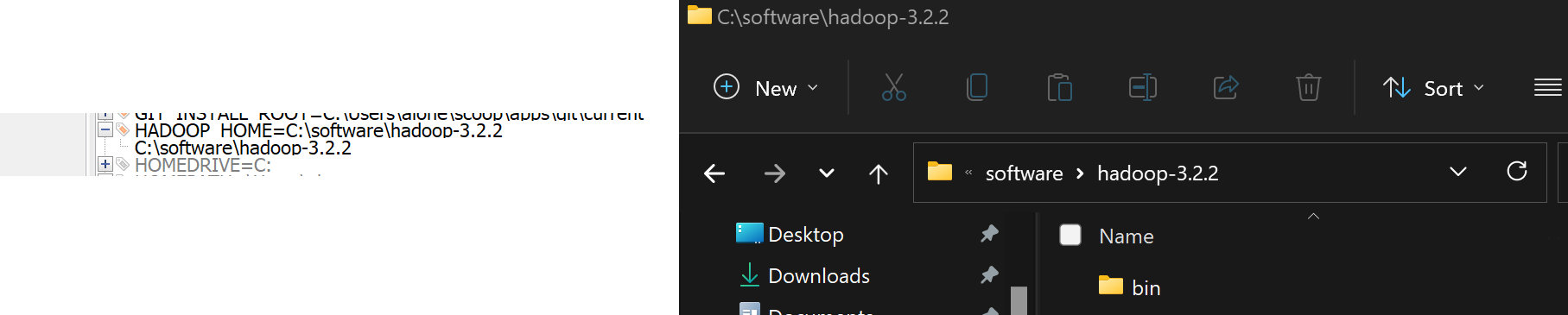

HADOOP_HOME must point to the folder that contains bin, not to bin itself.
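A quick way to sanity-check that setting before launching Spark is a small script like the one below. This is only a sketch: the function name `check_hadoop_home` is made up for illustration, and it assumes the usual winutils layout where `winutils.exe` sits directly inside the `bin` folder under HADOOP_HOME. It does not check whether the winutils version matches your Hadoop build.

```python
import os
from pathlib import Path


def check_hadoop_home(env=None):
    """Return a list of problems found with HADOOP_HOME (empty list: looks fine).

    Hypothetical helper; assumes winutils.exe lives in <HADOOP_HOME>/bin.
    """
    env = os.environ if env is None else env
    home = env.get("HADOOP_HOME")
    if not home:
        return ["HADOOP_HOME is not set"]

    root = Path(home)
    problems = []

    # A common mistake is pointing HADOOP_HOME at bin instead of its parent.
    if root.name.lower() == "bin":
        problems.append("HADOOP_HOME points at bin itself; use the parent folder")

    winutils = root / "bin" / "winutils.exe"
    if not winutils.is_file():
        problems.append(f"winutils.exe not found at {winutils}")

    return problems


if __name__ == "__main__":
    # Prints nothing when the layout looks right.
    for problem in check_hadoop_home():
        print(problem)
```

If the script prints nothing, HADOOP_HOME has the expected layout, and the remaining suspect is the winutils version.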


To contact me, send an email anytime or leave a comment below.